e6. compositional semantics from the ground up

phrases, functions, and projection

Let’s say we are a speaker who wants to say Brutus killed Caesar.[1] This meaning has two entities (Brutus and Caesar) and one event (killed). We need to put them together somehow.

We saw in episode three that lambda calculus was a useful tool for representing logical form. Lambda calculus, like all forms of function notation, contains functions and variables, which need to combine with each other in order to form mathematical expressions that can be evaluated as true or false. Sound familiar?

We can represent events as functions, and entities as variables:

Brutus killed Caesar.

killed(Brutus, Caesar)
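To make "evaluated as true or false" concrete, here is a minimal Python sketch that treats killed as a function over a tiny toy model of the world. The model (the set `KILLINGS`) is a made-up illustration, not part of the theory. The point is only that once both entity slots are filled, the expression comes out true or false.

```python
# A toy model of the world: the set of (killer, victim) pairs that hold.
# This model is a hypothetical illustration, not part of the theory.
KILLINGS = {("Brutus", "Caesar")}


def killed(x: str, y: str) -> bool:
    """killed(x, y) is true iff x killed y in the toy model."""
    return (x, y) in KILLINGS


print(killed("Brutus", "Caesar"))  # True
print(killed("Caesar", "Brutus"))  # False: argument order matters
```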

If we want to express this logical form as a sentence—to say it to someone else—we have to first transform it into a syntax tree.

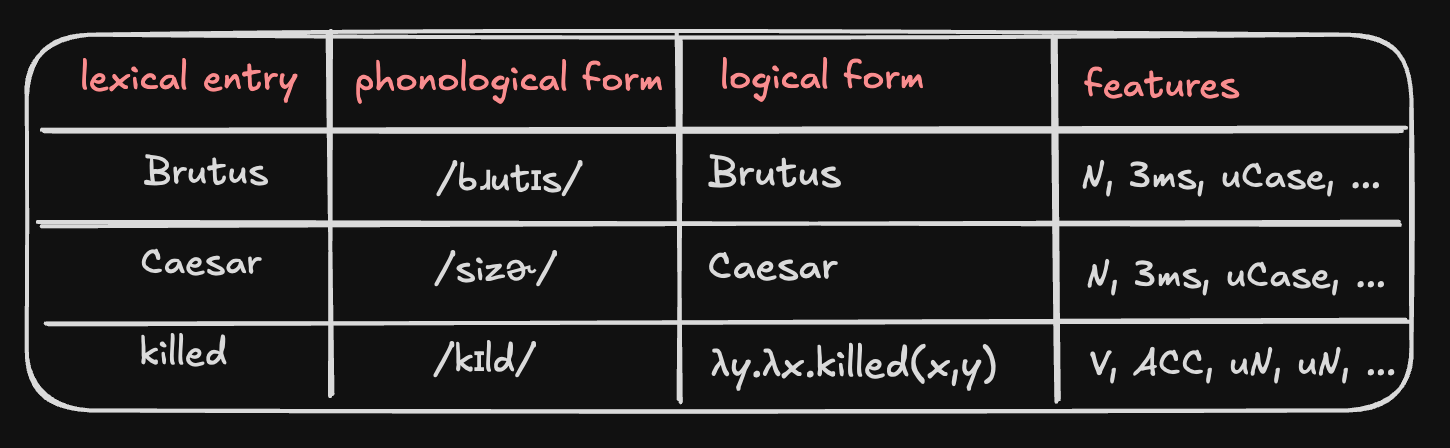

First we find all of our entities and events in the lexicon:

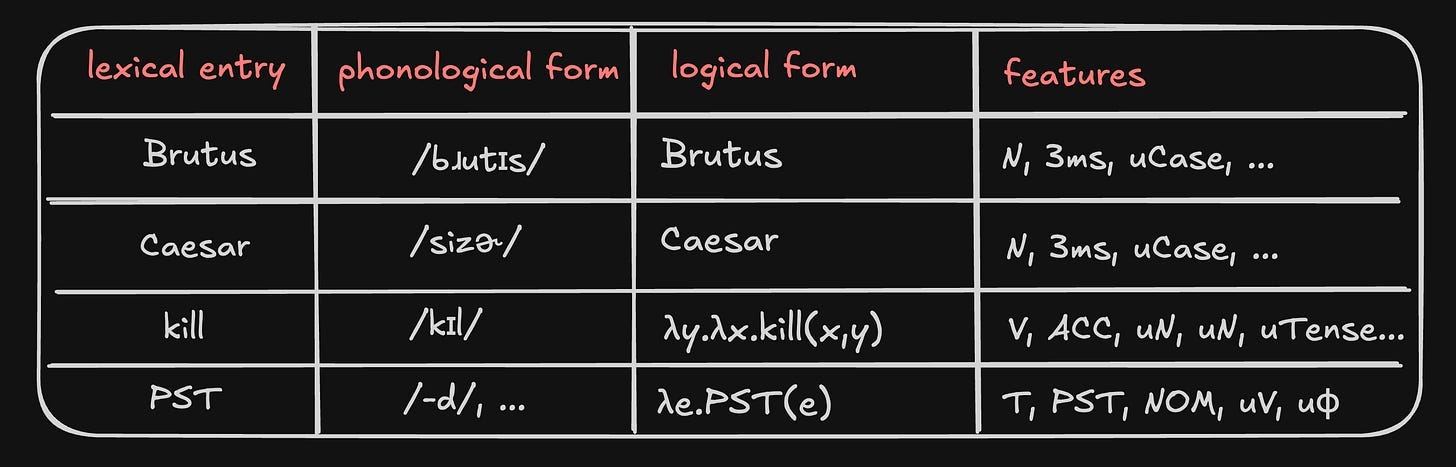

Actually, if you remember from episode four, verb roots are kept in the lexicon without tense, and tense markers are added in separately. So maybe our logical form looks more like this:

Brutus killed Caesar.

PST(kill(Brutus, Caesar))

This way we don’t need a separate lexical entry for kill, killed, kills, etc. Our lexicon now looks like this:

Note that the phonological forms of bound morphemes like the tense marker are a bit more complicated. We’ll talk about them another time.

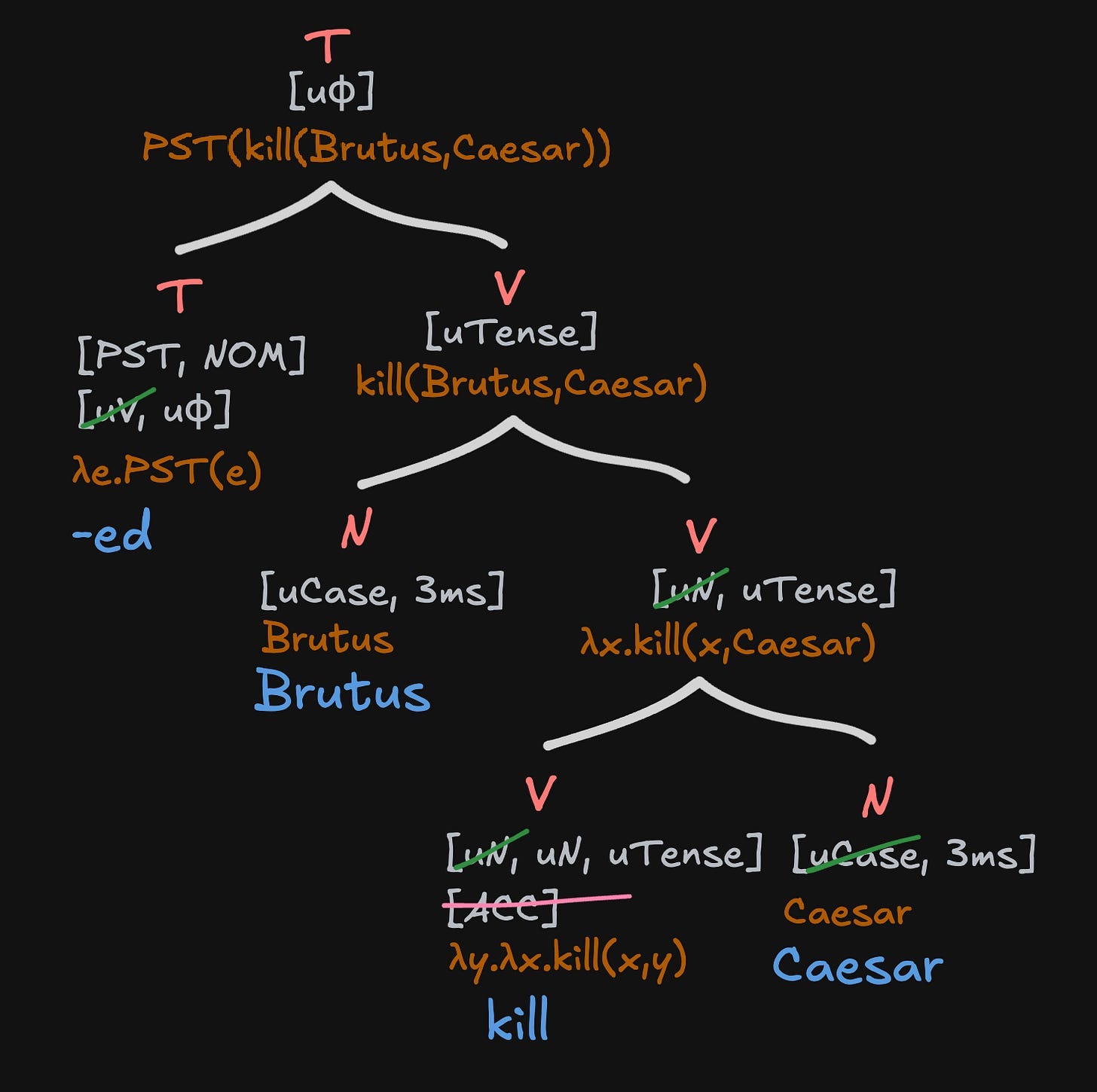
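A minimal sketch of why this saves lexical entries: there is one tense-free root, kill, and a tense operator that wraps the whole proposition. Logical forms are plain strings here, and the Python functions `kill` and `PST` simply mirror the notation above. `PRS` (a present-tense marker) is a hypothetical addition for contrast, not something this episode defines.

```python
def kill(x: str, y: str) -> str:
    """The tense-free root: builds the logical form kill(x, y)."""
    return f"kill({x}, {y})"


def PST(p: str) -> str:
    """Past-tense marker: wraps a whole proposition."""
    return f"PST({p})"


def PRS(p: str) -> str:
    """Hypothetical present-tense marker, included only for contrast."""
    return f"PRS({p})"


# The same single root entry serves every tensed form.
print(PST(kill("Brutus", "Caesar")))  # PST(kill(Brutus, Caesar))
print(PRS(kill("Brutus", "Caesar")))  # PRS(kill(Brutus, Caesar))
```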

Now that we’ve found all the necessary lexical entries (looking them up by their logical forms), it’s time to put them together. Just as with functions in math, we start at the most deeply nested spot, inside the innermost parentheses: we need to give the inputs Brutus and Caesar to kill().

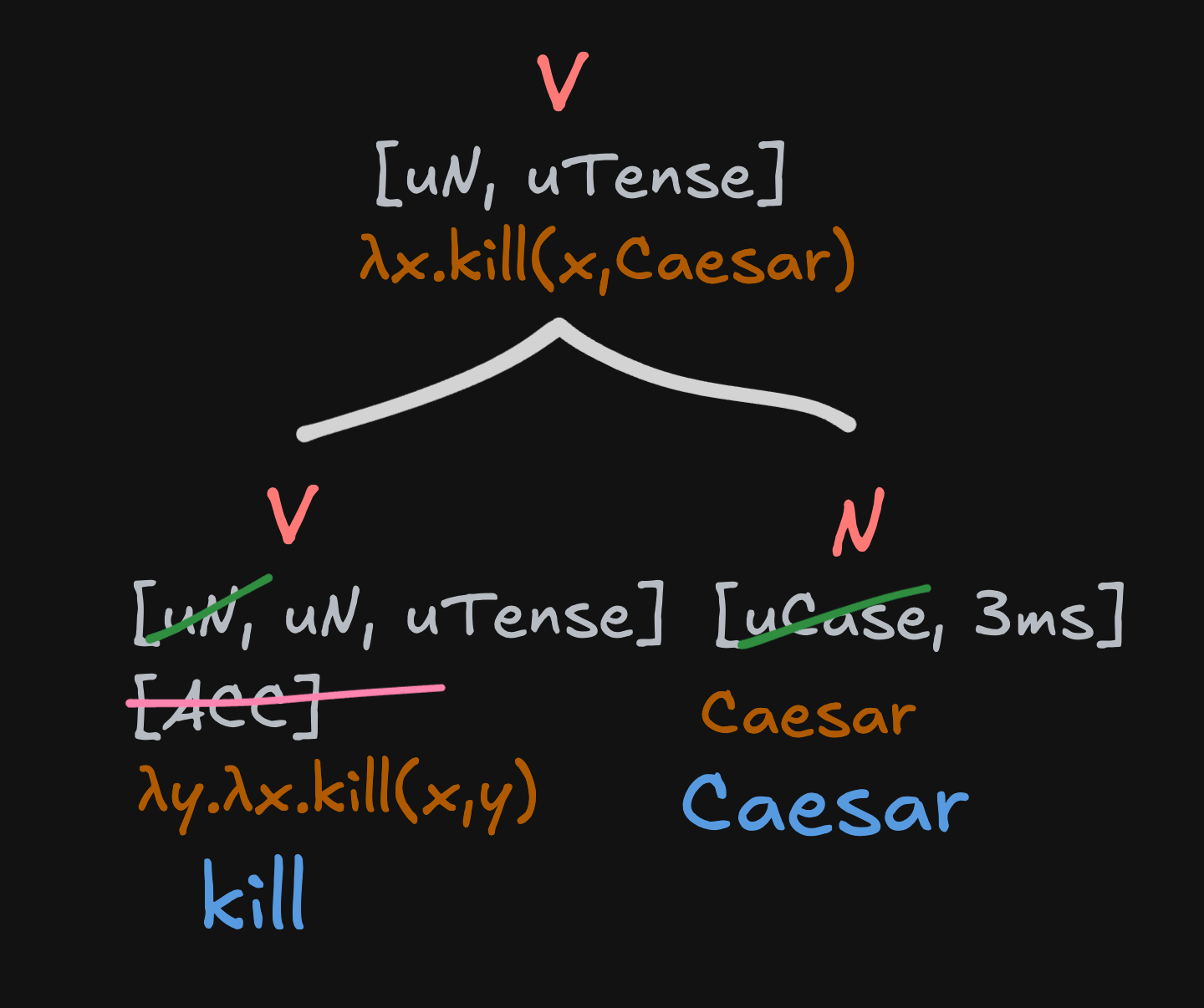

We merge Caesar with kill() first, simply because it’s the latter input[2]:

The uninterpretable case feature on Caesar is interpreted by the interpretable accusative case feature on kill, and one of the [uN] features on kill is interpreted by Caesar’s [N] feature.

What’s new here are the brownish-orange logical forms, especially the one up top at the merged node. But it’s really simple: all we did is plug the entity/variable Caesar into the event/function λy.λx.kill(x,y). Remember that λx.y means ‘take x, and return y’. So λy.λx.kill(x,y) means ‘take y, and return λx.kill(x,y)’. And that’s exactly what’s happening! The function takes Caesar and returns (at the higher node) λx.kill(x,Caesar). If this is hard to wrap your head around, try working through it yourself. Basically, every time you merge two syntactic objects (nodes), you plug one of them into the other.

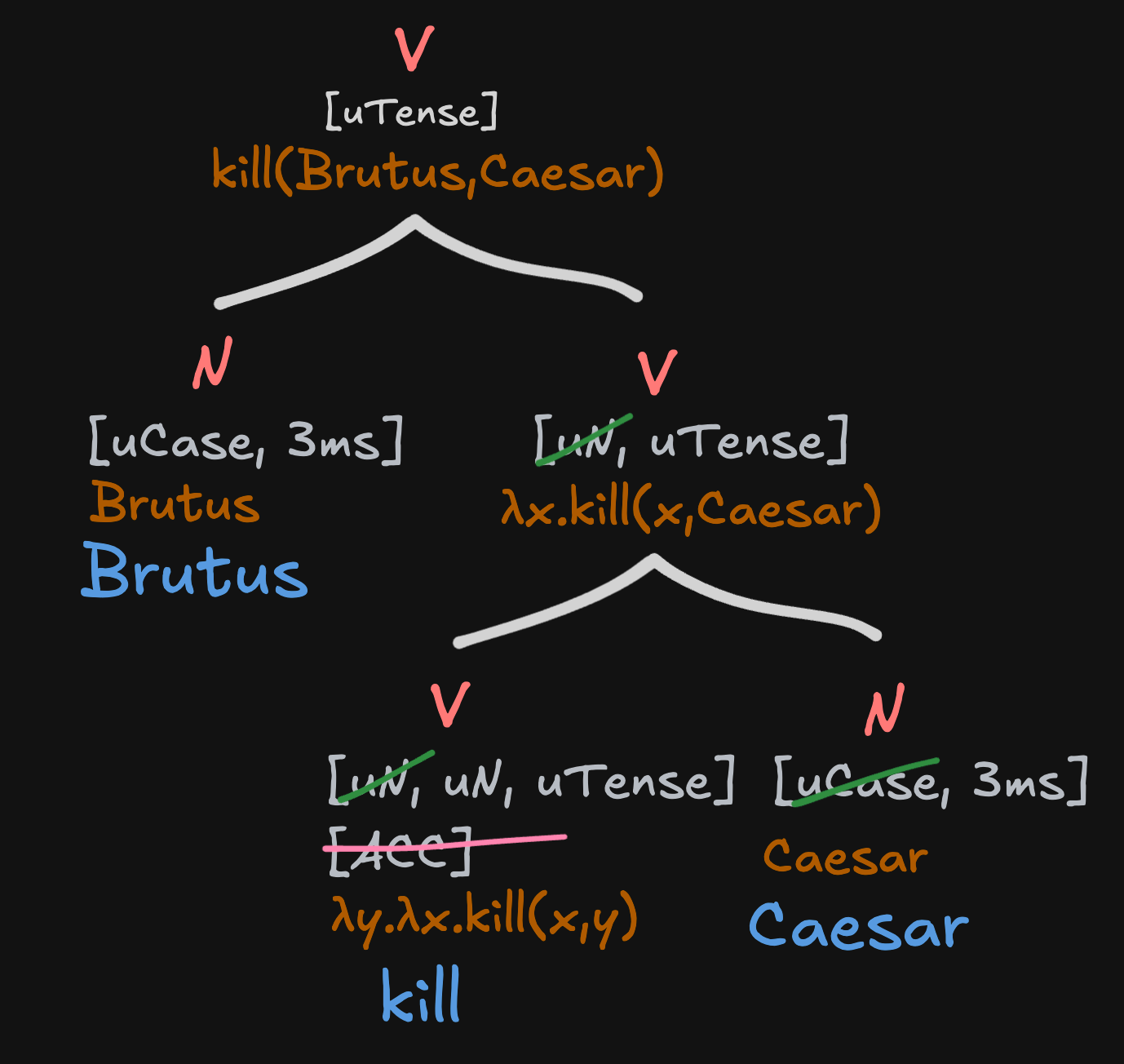
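Nesting Python's own `lambda` gives exactly this "take y, and return a function" behavior, so the step can be tried directly. In this sketch, logical forms are plain strings and `kill` is a hypothetical stand-in for the lexical entry λy.λx.kill(x,y).

```python
# λy.λx.kill(x, y): take y, and return a function that takes x.
kill = lambda y: lambda x: f"kill({x}, {y})"

# Merging Caesar plugs it in for y. What comes back is still a function,
# the counterpart of λx.kill(x, Caesar) at the merged node.
kill_caesar = kill("Caesar")
print(callable(kill_caesar))   # True

# Plugging in the second input saturates the function.
print(kill_caesar("Brutus"))   # kill(Brutus, Caesar)
```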

So next let’s merge Brutus, our subject.

And now the tense marker, like we did in episode four.

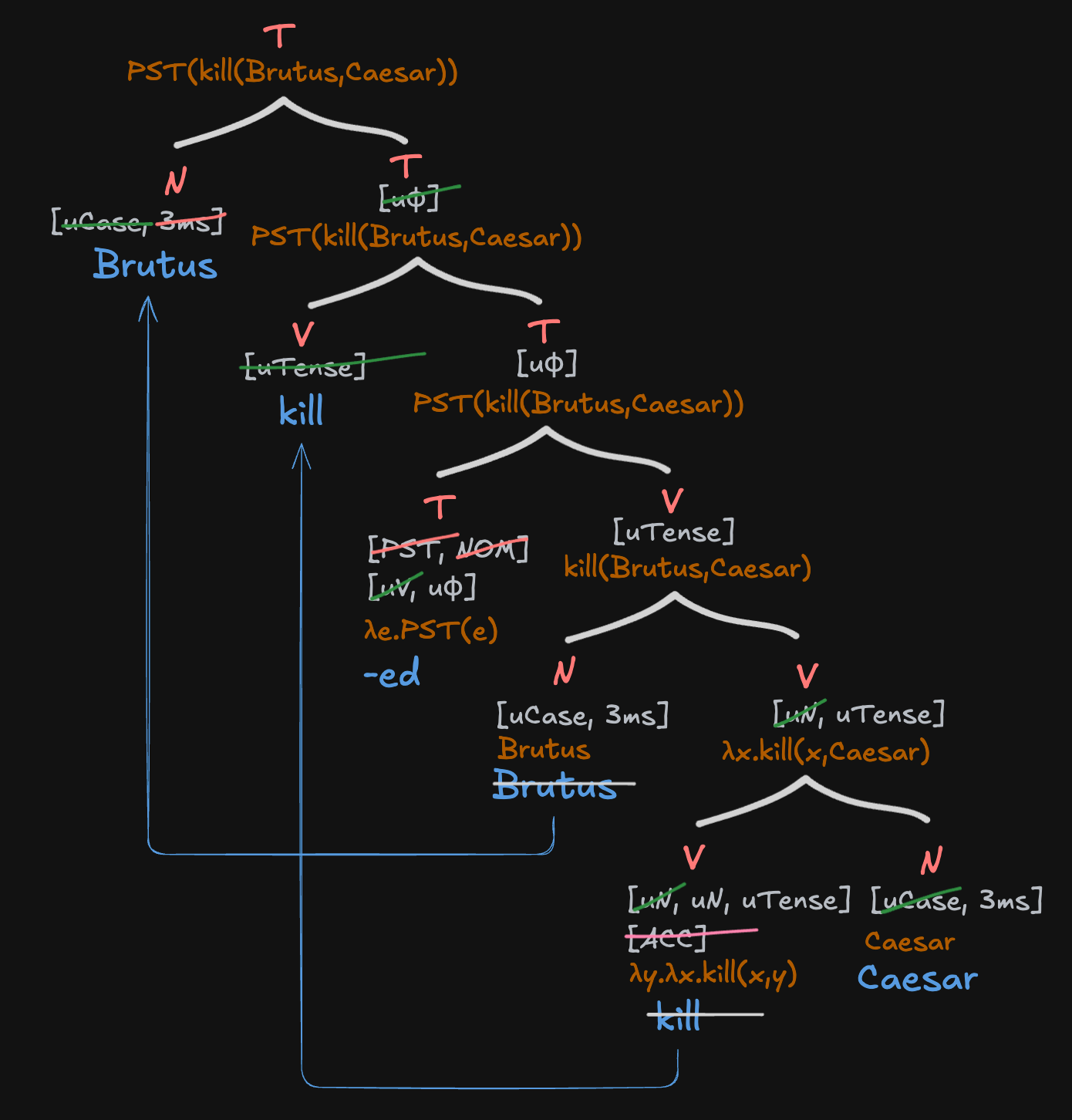

Finally, we’ll move the verb up to get tense, and then the subject Brutus to get case.

We’ll assume for now that moved elements don’t bring their logical forms with them, but you should know that this is something we’re going to come back to later.
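Setting movement aside (since moved elements are assumed not to carry their logical forms along), the whole derivation can be written as a chain of function applications. This assumes the tense marker's logical form is λp.PST(p), a function from a proposition p to its past-tense version. That λ-term is an assumption made here for illustration, chosen so that the result matches PST(kill(Brutus, Caesar)).

```latex
\begin{align*}
\text{merge Caesar:}\quad & (\lambda y.\lambda x.\,\mathrm{kill}(x,y))(\mathrm{Caesar}) \;\Rightarrow\; \lambda x.\,\mathrm{kill}(x,\mathrm{Caesar})\\
\text{merge Brutus:}\quad & (\lambda x.\,\mathrm{kill}(x,\mathrm{Caesar}))(\mathrm{Brutus}) \;\Rightarrow\; \mathrm{kill}(\mathrm{Brutus},\mathrm{Caesar})\\
\text{merge PST:}\quad & (\lambda p.\,\mathrm{PST}(p))(\mathrm{kill}(\mathrm{Brutus},\mathrm{Caesar})) \;\Rightarrow\; \mathrm{PST}(\mathrm{kill}(\mathrm{Brutus},\mathrm{Caesar}))
\end{align*}
```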

One thing you might notice about this derivation is that the labelling and projection correspond directly to which nodes are inputted into which. Every parent node has one daughter that is semantically ‘inputted’ into the other daughter. We’ll call the first the ‘argument(ative daughter)’ and the second the ‘function(al daughter)’. Every parent node derives its features from its functional daughter. The features of the argumentative daughter ARE LOST (edit: only in terms of projection; they aren’t deleted from the argumentative daughter itself).
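Here is a minimal sketch of that bookkeeping under simplifying assumptions. Each node carries a category label, a tuple of feature names, and a logical form (a Python callable for functions, a string for saturated meanings). The caller says which daughter is the function, and feature checking is not modeled, so the parent simply copies the function's features. The `Node` class, the `merge` helper, and the feature names are hypothetical, written only for this illustration.

```python
from dataclasses import dataclass
from typing import Callable, Tuple, Union

# A logical form is either saturated (a string) or a function awaiting input.
LogicalForm = Union[str, Callable]


@dataclass
class Node:
    label: str                  # category, e.g. "V", "N", "T"
    features: Tuple[str, ...]   # simplified feature names (checking not modeled)
    lf: LogicalForm             # callable if this node is a function


def merge(function: Node, argument: Node) -> Node:
    """Plug the argument's LF into the function's LF; the parent takes its
    label and features from the functional daughter only."""
    if not callable(function.lf):
        raise TypeError("the functional daughter needs a function as its LF")
    return Node(
        label=function.label,
        features=function.features,
        lf=function.lf(argument.lf),
    )


# Hypothetical lexical entries; feature names are simplified placeholders.
kill = Node("V", ("V", "uN", "uN", "Acc"), lambda y: lambda x: f"kill({x}, {y})")
caesar = Node("N", ("N", "uCase"), "Caesar")
brutus = Node("N", ("N", "uCase"), "Brutus")
pst = Node("T", ("T",), lambda p: f"PST({p})")

v_bar = merge(kill, caesar)     # LF is still a function: λx.kill(x, Caesar)
vp = merge(v_bar, brutus)       # LF: kill(Brutus, Caesar)
tp = merge(pst, vp)             # LF: PST(kill(Brutus, Caesar))

print(vp.label, tp.label)       # V T
print(tp.lf)                    # PST(kill(Brutus, Caesar))
print("uCase" in vp.features)   # False: the arguments' features did not project
```

Passing the functional daughter explicitly keeps the sketch from having to guess which daughter is the function; here that is settled by the lexical entries chosen.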

This answers our first running question!

Why does cat project its features in big cat, but drink projects its features in drink lemonade?

Because cat in big cat is the function, and big is the argument, and drink in drink lemonade is the function, and lemonade is the argument!

This makes sense intuitively. In a way, it should be the null hypothesis. The argument’s logical form is always subsumed into the function’s logical form; all we are saying now is that all of its other features are similarly subsumed!

The Feature Projection Rule: functions project their features; arguments don’t.[3]

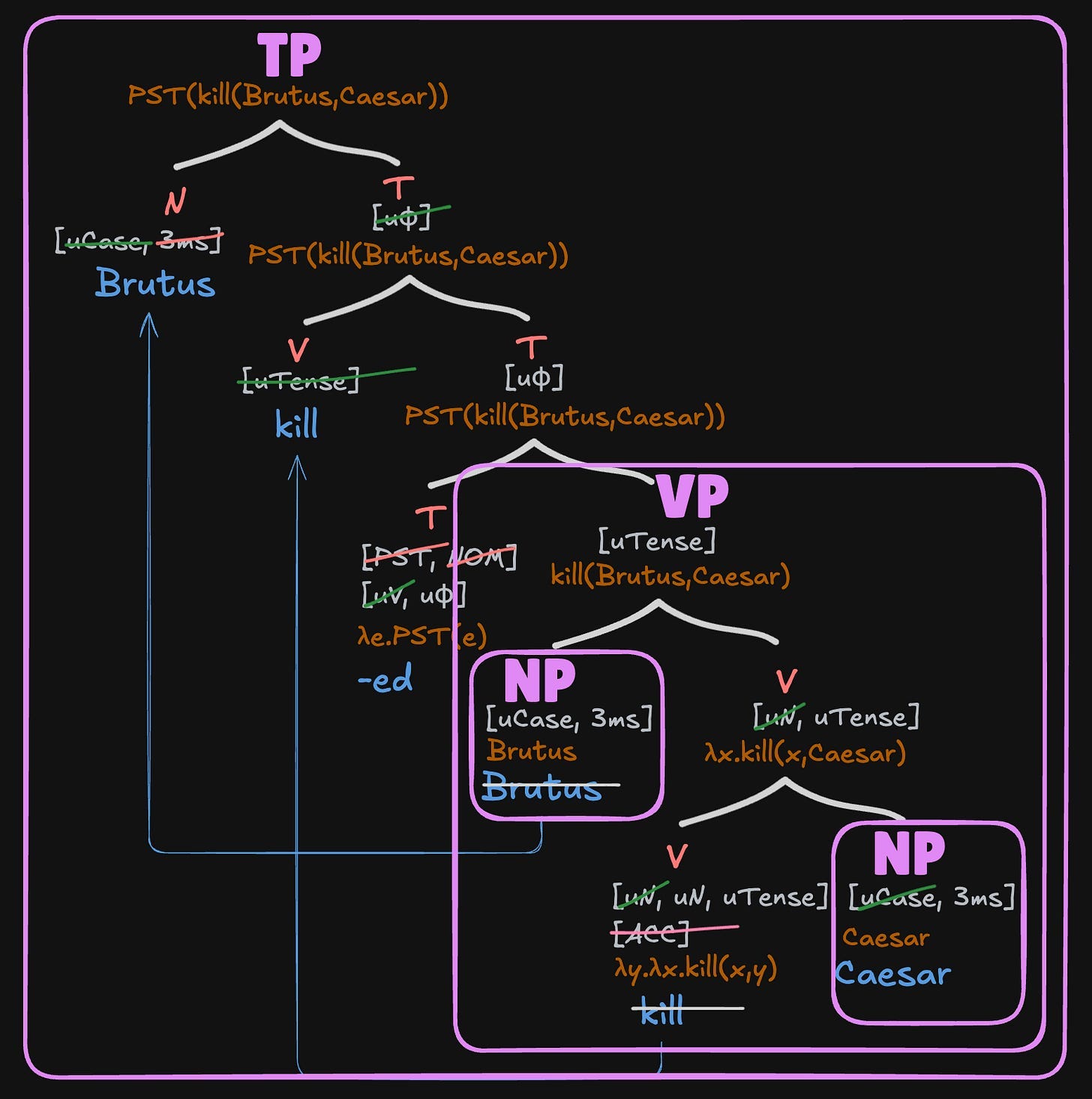

I’m also going to take this opportunity to introduce a few important pieces of terminology that we’ll be using throughout the rest of this blog. A phrase, or maximal projection, consists of a terminal node, every node above it in an unbroken chain that shares its label (that is, every node it projects), and every node below all of these nodes. The head, or minimal projection, of any given phrase is just the terminal node defining it.

Here’s a guide to the phrases in Brutus killed Caesar:

We write XP at the top of each phrase to indicate that it is the maximal projection of a head of category X.

Two more quick pieces of terminology:

The complement of a head/phrase is its first argument.

A specifier of a head/phrase is one of its other arguments.

In this framework we can also define head in the following way:

The head of a phrase is its function.
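These definitions are mechanical enough to compute. The sketch below builds the pre-movement tree for Brutus killed Caesar, records which terminal each node projects from, and then reads off a head's maximal projection, its complement (first argument), and its specifiers (later arguments). All names are hypothetical, and linear order and movement are ignored.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(eq=False)
class Node:
    label: str                                   # category: "V", "N", "T", ...
    word: Optional[str] = None                   # set on terminals only
    head: Optional[Node] = field(default=None, repr=False)
    parent: Optional[Node] = field(default=None, repr=False)
    argument: Optional[Node] = field(default=None, repr=False)  # argumentative daughter


def terminal(label: str, word: str) -> Node:
    node = Node(label, word)
    node.head = node                             # a terminal heads itself
    return node


def merge(function: Node, argument: Node) -> Node:
    """The parent is a projection of the functional daughter's head."""
    parent = Node(function.label, head=function.head, argument=argument)
    function.parent = parent
    argument.parent = parent
    return parent


def maximal_projection(head: Node) -> Node:
    """Climb while the parent is still a projection of the same head."""
    node = head
    while node.parent is not None and node.parent.head is head:
        node = node.parent
    return node


def arguments(head: Node) -> List[Node]:
    """The head's arguments, in the order they were merged."""
    args, node = [], head
    while node.parent is not None and node.parent.head is head:
        node = node.parent
        args.append(node.argument)
    return args


def complement(head: Node) -> Optional[Node]:
    args = arguments(head)
    return args[0] if args else None


def specifiers(head: Node) -> List[Node]:
    return arguments(head)[1:]


kill = terminal("V", "kill")
caesar = terminal("N", "Caesar")
brutus = terminal("N", "Brutus")
pst = terminal("T", "PST")

vp = merge(merge(kill, caesar), brutus)
tp = merge(pst, vp)

print(maximal_projection(kill) is vp)        # True: the VP
print(complement(kill).word)                 # Caesar
print([s.word for s in specifiers(kill)])    # ['Brutus']
print(maximal_projection(caesar) is caesar)  # True: a bare N is its own phrase
```

Tracking the projecting head directly, rather than comparing category labels, is a design choice of this sketch: it keeps a V that takes another VP as its argument from being mistaken for part of that VP's projection.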

conclusion

I hope this was a good introduction to basic compositional semantics. The key takeaway from this episode and the last one should be that compositional lambda calculus can be used in combination with Merge to create hierarchical logical structures, which (at least as we’ve defined them thus far) are equivalent to our intuitions about conceptual structure.[4]

Also, we learned about heads and phrases, complements and specifiers, and answered our first Running Question with the Feature Projection Rule!

We’re actually going to take a little bit of a detour in the next few episodes and talk about the evolution of compositionality, but we’ll get back to more complex compositional semantics right after that!

note that this is *a* theory. not the most well researched theory or the most accurate theory. just the one david came up with in his backyard for these reasons. david might sound confident but he is not. however, if you use his ideas, please cite him!!

[1] I am not a Romanophile; this is just a really common example sentence in semantics.

[2] This is an arbitrary rule; what matters is that Caesar is merged first, not that the latter input is. We could just as easily say the former input is merged first, write the logical form with the victim first as kill(Caesar, Brutus), and represent kill as λx.λy.kill(x,y). Why do we need to merge Caesar first? Because there’s a lot of evidence that, cross-linguistically, objects come ‘lower’ in the tree than subjects. For example, subjects bind objects and not the other way around (we’ll get to binding later, but basically you can say Caesar killed himself and not Himself killed Caesar), and objects are much more likely than subjects to form idioms with verbs.
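The equivalence can be checked by working both conventions through. In the second line, kill's slots are assumed to be reordered so that kill(x,y) reads 'y killed x' (victim first). Under that assumption, Caesar is still the first entity plugged in under either convention, and only the notation for the final proposition differs.

```latex
\begin{align*}
\text{latter input first:}\quad & (\lambda y.\lambda x.\,\mathrm{kill}(x,y))(\mathrm{Caesar})(\mathrm{Brutus}) \;\Rightarrow\; \mathrm{kill}(\mathrm{Brutus},\mathrm{Caesar})\\
\text{former input first:}\quad & (\lambda x.\lambda y.\,\mathrm{kill}(x,y))(\mathrm{Caesar})(\mathrm{Brutus}) \;\Rightarrow\; \mathrm{kill}(\mathrm{Caesar},\mathrm{Brutus})
\end{align*}
```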

[3] This is one of the things I really just came up with myself, but this is such well-trodden territory that I’m sure either (a) there’s good cross-linguistic evidence that refutes it or (b) someone else has also come up with it before and I just don’t know about it. Would love to know if so! I may also revise this slightly later.

[4] Importantly, this does not imply anything about how conceptual structure is represented in our heads with neurons.